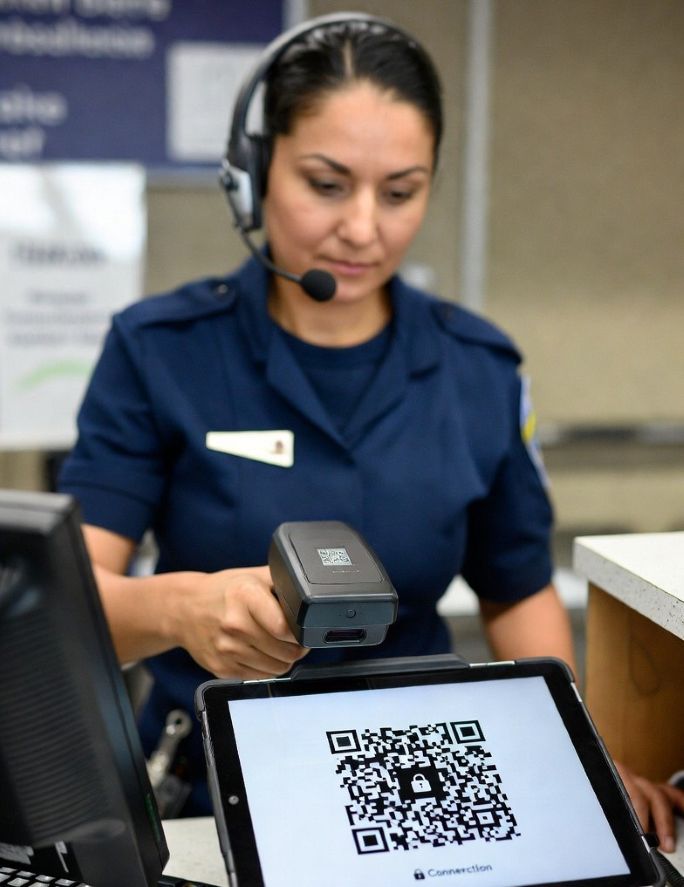

For decades, competitive games have relied on Elo-style ranking systems to measure skill, and some players even seek match help to improve results. Whether you’re playing chess, CS2, or grinding FACEIT, your rank is represented by a number that goes up when you win and down when you lose. It’s simple, widely understood, and effective to a point.

But as competitive gaming evolves, so do its challenges. Issues such as boosting, smurfing, account sharing, and a lack of transparency continue to affect ranking integrity. This has led to growing interest in alternative systems, particularly those inspired by blockchain technology. Instead of relying solely on Elo, what if player performance, reputation, and progression were tracked through tokens and decentralized systems? This idea may sound futuristic, but it introduces some compelling possibilities.

The Limits of Traditional Elo Systems

Elo works well for measuring win-loss outcomes, but it has limitations. It reduces performance to a single number that moves only according to match results, ignoring context such as individual contribution, consistency, or long-term behavior.
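For reference, the classic Elo update (a logistic expected score on a 400-point scale, followed by a K-factor adjustment) can be sketched in Python. K = 32 is a common textbook value, not any particular platform's setting, and services such as FACEIT may use their own variants. The thing to notice is that the only per-match input is the result.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a: float, rating_b: float, score_a: float, k: float = 32) -> float:
    """Return A's new rating. score_a is 1 for a win, 0.5 for a draw, 0 for a loss.

    Nothing about *how* A played enters the formula, only the result.
    """
    return rating_a + k * (score_a - expected_score(rating_a, rating_b))


# Two evenly matched players: the winner gains K/2, the loser drops K/2.
print(elo_update(1500, 1500, 1.0))  # 1516.0
print(elo_update(1500, 1500, 0.0))  # 1484.0
```

In a team game, every player on the winning side receives the same result, so a carried player and the player carrying them move in the same direction.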

In platforms like FACEIT, this can lead to common frustrations:

- Players being carried to higher ranks

- Smurfs disrupting matchmaking

- Boosted accounts that don’t reflect actual skill

- Limited transparency on how rankings are calculated

Because the rating is calculated and stored by a single platform, players must trust that the system is fair, even when it feels inconsistent.

What Blockchain Brings to the Table

Blockchain technology is built around transparency, immutability, and decentralization. Every transaction or record is stored in a way that cannot easily be altered, and data is often publicly verifiable.

Applied to competitive gaming, this means:

- Match histories that cannot be modified or hidden

- Transparent ranking calculations

- Player identities that are harder to fake or share

- A system that does not rely on a single controlling authority

These principles open the door to more trustworthy and flexible ranking systems.
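The tamper-evidence property behind "match histories that cannot be modified" can be illustrated with a hash-chained log, where each entry commits to the hash of the one before it. This is a minimal single-machine sketch, not a blockchain: there is no network or consensus, and the record fields (`match_id`, `kills`, and so on) are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class MatchLog:
    """Append-only match history; each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _hash(record: dict, prev_hash: str) -> str:
        # sort_keys makes the serialization deterministic for identical records
        payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry_hash = self._hash(record, prev_hash)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; editing any earlier record breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if self._hash(entry["record"], prev_hash) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = MatchLog()
log.append({"match_id": "m1", "player": "alice", "result": "win", "kills": 24})
log.append({"match_id": "m2", "player": "alice", "result": "loss", "kills": 11})
print(log.verify())  # True
log.entries[0]["record"]["kills"] = 40  # quietly rewrite history
print(log.verify())  # False
```

On its own, this only makes tampering detectable: whoever holds the whole log could still rewrite an entry and recompute every later hash. Public blockchains resist that by replicating the ledger across many independent nodes, which this sketch does not attempt.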

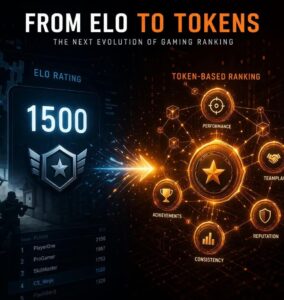

From Elo to Token-Based Systems

Instead of a single Elo number, a blockchain-inspired system could use tokens to represent different aspects of a player’s performance and reputation.

Think of tokens as digital units that reflect value. In gaming, this value could be tied to skill, consistency, teamwork, or achievements.

For example, a player might earn:

- Performance tokens for strong individual play

- Consistency tokens for maintaining steady results over time

- Teamplay tokens for effective coordination and support

- Reputation tokens for positive behavior and fair play

Rather than collapsing everything into a single number, this system creates a multidimensional profile of a player.
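A minimal sketch of such a profile, assuming the four token types listed above and whole-number balances (both illustrative choices, not a specification):

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum


class TokenType(Enum):
    PERFORMANCE = "performance"
    CONSISTENCY = "consistency"
    TEAMPLAY = "teamplay"
    REPUTATION = "reputation"


@dataclass
class PlayerProfile:
    """Hypothetical profile: one balance per token type instead of one Elo number."""
    player_id: str
    balances: Counter = field(default_factory=Counter)

    def award(self, token: TokenType, amount: int) -> None:
        if amount <= 0:
            raise ValueError("award amount must be positive")
        self.balances[token] += amount

    def summary(self) -> dict:
        # Report every dimension, including ones the player has not earned yet.
        return {t.value: self.balances[t] for t in TokenType}


profile = PlayerProfile("alice")
profile.award(TokenType.PERFORMANCE, 3)
profile.award(TokenType.TEAMPLAY, 5)
print(profile.summary())
# {'performance': 3, 'consistency': 0, 'teamplay': 5, 'reputation': 0}
```

Two players with the same total can now look very different: one strong in individual performance, another in teamplay and reputation.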

Proof of Skill Instead of Just Wins

One of the most interesting ideas borrowed from blockchain is the concept of “proof.” Blockchain networks reach agreement through consensus mechanisms such as proof-of-work or proof-of-stake. In gaming, this could translate into proof of skill.

Instead of ranking players purely on wins and losses, the system could verify and reward actual performance within matches. This includes:

- Impactful plays

- Objective contributions

- Decision-making quality

- Consistency across games

Because these records are stored in a transparent and tamper-resistant way, any attempt to inflate rank through boosting or account sharing leaves a permanent, auditable trail, which makes it much harder to get away with.
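To make proof of skill concrete, here is a hypothetical scoring sketch. The stat fields, weights, and threshold are invented for illustration and would need calibration for any real game; the one design choice it shows is that tokens depend on individual contribution, not on whether the team won.

```python
from dataclasses import dataclass


@dataclass
class MatchStats:
    """Hypothetical per-player stats for one match; fields and scales are illustrative."""
    won: bool
    impact_plays: int        # e.g. opening kills, clutches
    objective_actions: int   # e.g. plants, defuses
    decision_quality: float  # 0.0 to 1.0, assumed to come from some analytics model


# Illustrative weights and threshold, not calibrated values.
WEIGHTS = {"impact_plays": 1.0, "objective_actions": 0.5, "decision_quality": 4.0}
THRESHOLD = 6.0


def skill_score(stats: MatchStats) -> float:
    """Weighted sum of individual contribution; stats.won is deliberately ignored."""
    return (
        WEIGHTS["impact_plays"] * stats.impact_plays
        + WEIGHTS["objective_actions"] * stats.objective_actions
        + WEIGHTS["decision_quality"] * stats.decision_quality
    )


def performance_tokens(stats: MatchStats) -> int:
    """Mint one token per full point above the threshold, win or lose."""
    return max(0, int(skill_score(stats) - THRESHOLD))


low_impact_win = MatchStats(won=True, impact_plays=1, objective_actions=0, decision_quality=0.3)
high_impact_loss = MatchStats(won=False, impact_plays=6, objective_actions=4, decision_quality=0.8)
print(performance_tokens(low_impact_win), performance_tokens(high_impact_loss))  # 0 5
```

A carried player on the winning team earns nothing here, while a strong player on the losing team still earns tokens, which is the opposite of what a pure Elo update does.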

Reducing Boosting and Smurfing

Boosting exists because Elo can be manipulated. A higher-skilled player can carry a lower-skilled account, artificially increasing its rank. Once the boost ends, the account sits at a rank its owner's actual ability cannot sustain.

A token-based system makes this harder in several ways. First, performance-based tokens would require consistent individual contribution. Simply winning games would not be enough if the player’s actual impact is low.

Second, decentralized identity systems could tie accounts to unique credentials, making account sharing more difficult.

Third, because historical data is immutable, unusual patterns, such as sudden spikes in performance, are easier to detect and flag. Together, these mechanisms create a system where shortcuts are less effective and long-term consistency is rewarded.
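Immutability preserves the evidence, but some analysis still has to spot the pattern. One simple option is a z-score check that compares a player's latest performance score against their own history. This is a minimal sketch; the thresholds are illustrative assumptions, and a real system would likely use more robust statistics.

```python
from statistics import mean, stdev


def is_suspicious_spike(
    history: list[float],
    latest: float,
    z_limit: float = 3.0,
    min_sigma: float = 0.5,
) -> bool:
    """Flag `latest` if it is more than `z_limit` standard deviations above the player's history.

    min_sigma puts a floor under the spread so very flat histories do not turn
    every small improvement into an alert. Both defaults are illustrative.
    """
    if len(history) < 2:
        return False  # stdev needs at least two points; too little data to judge
    mu = mean(history)
    sigma = max(stdev(history), min_sigma)
    return (latest - mu) / sigma > z_limit


history = [9.0, 11.0, 10.0, 12.0, 8.0]  # hypothetical per-match performance scores
print(is_suspicious_spike(history, 13.0))  # False
print(is_suspicious_spike(history, 20.0))  # True
```

A flag like this is a prompt for review rather than proof of boosting, since a genuine improvement streak and a shared account can look alike in the numbers.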

New Incentives for Players

Another advantage of token-based systems is the ability to align incentives more effectively. Instead of focusing only on winning, players are encouraged to improve across multiple dimensions.

For example:

- A player might focus on improving communication to earn teamplay tokens.

- Another might work on consistency to maintain performance rewards.

- Positive behavior could be rewarded, reducing toxicity.

In some models, tokens could even have real-world value or be used within the platform for rewards, tournaments, or access to higher-level matches. This shifts the focus from simply climbing the ranks to building a well-rounded player profile.

Challenges and Considerations

While the idea is promising, it’s not without challenges. Complexity is a major concern. Elo is easy to understand, while token-based systems can be harder to explain and adopt. Players may prefer simplicity over detailed breakdowns.

There’s also the issue of data accuracy. Measuring performance in a fair and meaningful way requires advanced analytics and careful design. Poor implementation could lead to new forms of exploitation. Finally, integration with existing platforms would require significant changes. Most competitive systems today are deeply tied to traditional ranking models.

What This Means for the Future of Competitive Gaming

Interest in token-inspired systems doesn’t mean Elo will disappear anytime soon. It still serves as a solid foundation for ranking players. However, the future may involve hybrid systems that combine the simplicity of Elo with the depth and transparency of blockchain concepts.
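One hypothetical way such a hybrid could work is to keep the Elo update but scale it by a bounded performance factor. In this sketch, `perf_ratio` is assumed to be the player's match score divided by the lobby average, and `opponent_rating` might be the opposing team's average in a team game; the clamp range of 0.5 to 1.5 is an arbitrary illustrative choice that keeps the match result dominant.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expectation that A beats B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def hybrid_update(
    rating: float,
    opponent_rating: float,
    won: bool,
    perf_ratio: float,
    k: float = 32.0,
) -> float:
    """Elo update nudged by individual performance (hypothetical scheme).

    perf_ratio of 1.0 means an average performance; it is clamped to [0.5, 1.5].
    """
    ratio = min(max(perf_ratio, 0.5), 1.5)
    base = k * ((1.0 if won else 0.0) - expected_score(rating, opponent_rating))
    # Strong players gain more on wins and lose less on losses; weak players the reverse.
    adjusted = base * ratio if won else base / ratio
    return rating + adjusted


print(hybrid_update(1500, 1500, won=True, perf_ratio=1.25))             # 1520.0
print(round(hybrid_update(1500, 1500, won=False, perf_ratio=1.25), 1))  # 1487.2
```

Players still see a single familiar number, while the size of each change reflects how they actually played.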

We may see:

- More detailed performance tracking alongside rank

- Verifiable player histories that carry across platforms

- New reward systems that go beyond wins and losses

As competitive gaming continues to grow, the demand for fairness, transparency, and meaningful progression will only increase.

Final Thoughts

Elo has defined competitive ranking for decades, but it was originally designed for one-on-one chess, not the complexity of modern team-based online gaming. Issues such as boosting, smurfing, and a lack of transparency highlight the need for new approaches.

Blockchain-inspired systems offer a different perspective. By focusing on transparency, verifiable performance, and multidimensional rewards, they create a framework that could make competitive play fairer and more engaging.

While still evolving, the idea of moving from Elo to tokens represents a shift in how we think about skill, progress, and integrity in gaming. Instead of relying on a single number, players may one day be defined by a richer, more accurate reflection of their true performance.
